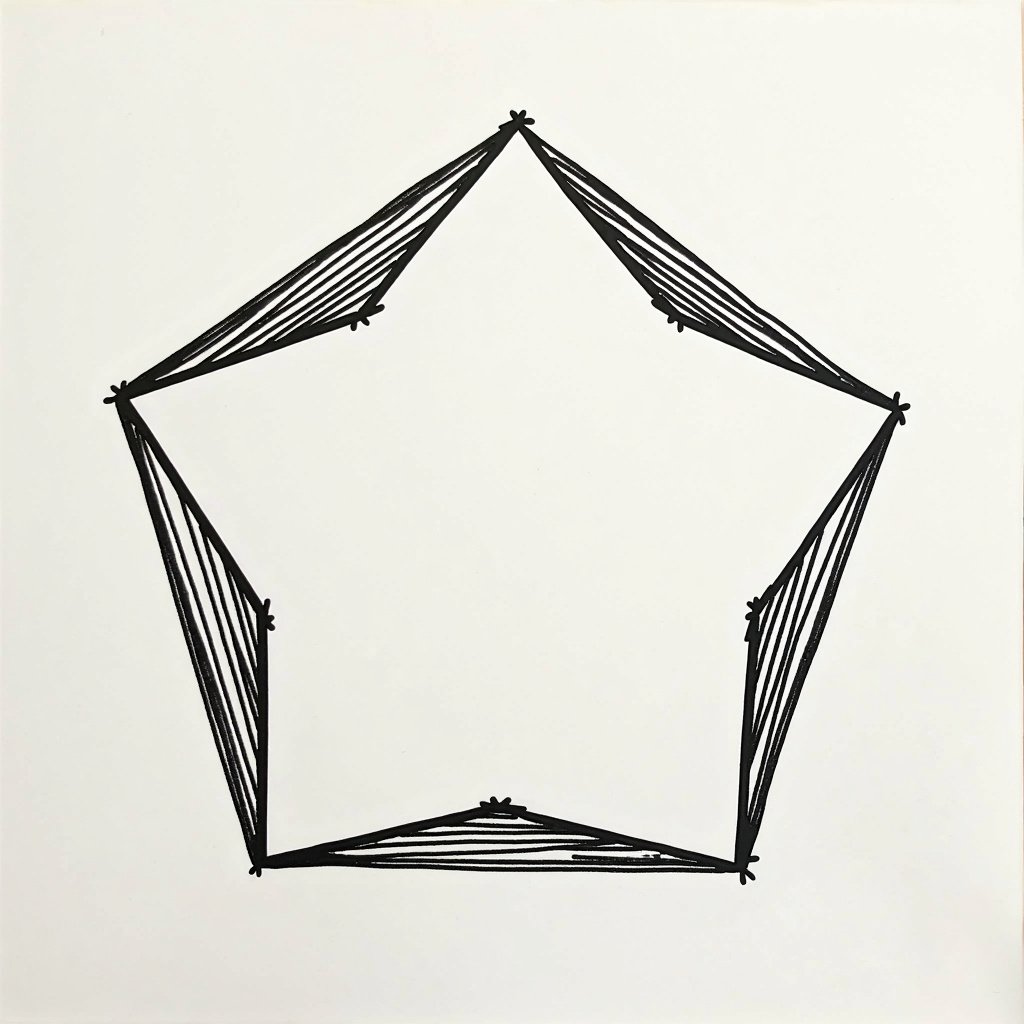

I don’t really know, but I think it’s mostly to do with pentagons being under-represented in the world in general, plus the specific way that a pentagon breaks symmetry. It’s not completely impossible to get ’em to make one, though. After a lot of futzing around, o1 wrote this prompt, which seems to work 50% of the time with FLUX [pro]:

An illustration of a regular pentagon shape: a flat, two-dimensional geometric figure with five equal straight sides and five equal angles, drawn with black lines on a white background, centered in the image.
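
For comparison, here is a rough sketch of what that prompt is literally asking for: five equal sides, five equal angles, black lines on white, centered. It has nothing to do with FLUX or o1; the function names (`regular_pentagon`, `side_lengths`, `interior_angles`, `to_svg`) are made up for illustration, and it only uses the Python standard library.

```python
import math


def regular_pentagon(cx=0.0, cy=0.0, r=1.0):
    """Return the 5 vertices of a regular pentagon centered at (cx, cy).

    Vertices sit on a circle of radius r, 72 degrees apart, starting
    from the top (90 degrees) in ordinary math coordinates (y up).
    """
    pts = []
    for k in range(5):
        theta = math.pi / 2 + 2 * math.pi * k / 5
        pts.append((cx + r * math.cos(theta), cy + r * math.sin(theta)))
    return pts


def side_lengths(pts):
    """Distance between each vertex and the next one, wrapping around."""
    n = len(pts)
    return [math.dist(pts[i], pts[(i + 1) % n]) for i in range(n)]


def interior_angles(pts):
    """Interior angle at each vertex, in degrees."""
    n = len(pts)
    angles = []
    for i in range(n):
        ax, ay = pts[i - 1]
        bx, by = pts[i]
        cx, cy = pts[(i + 1) % n]
        v1 = (ax - bx, ay - by)
        v2 = (cx - bx, cy - by)
        dot = v1[0] * v2[0] + v1[1] * v2[1]
        cos_a = dot / (math.hypot(*v1) * math.hypot(*v2))
        # Clamp to guard against tiny floating-point overshoot.
        angles.append(math.degrees(math.acos(max(-1.0, min(1.0, cos_a)))))
    return angles


def to_svg(pts, size=200):
    """Black outline on a white background, as the prompt describes.

    SVG's y axis points down, so y is flipped around the image height.
    """
    points = " ".join(f"{x:.2f},{size - y:.2f}" for x, y in pts)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{size}" '
        f'height="{size}" viewBox="0 0 {size} {size}">'
        '<rect width="100%" height="100%" fill="white"/>'
        f'<polygon points="{points}" fill="none" stroke="black" '
        'stroke-width="2"/></svg>'
    )


if __name__ == "__main__":
    pentagon = regular_pentagon(cx=100, cy=100, r=80)
    print(side_lengths(pentagon))
    print(interior_angles(pentagon))
    with open("pentagon.svg", "w") as f:
        f.write(to_svg(pentagon))
```

Having a ground-truth outline like this makes it easier to eyeball whether a generated image actually has five equal sides or has quietly turned into a hexagon.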
